WhiteBox Symposium on Explainability

2023/05/02

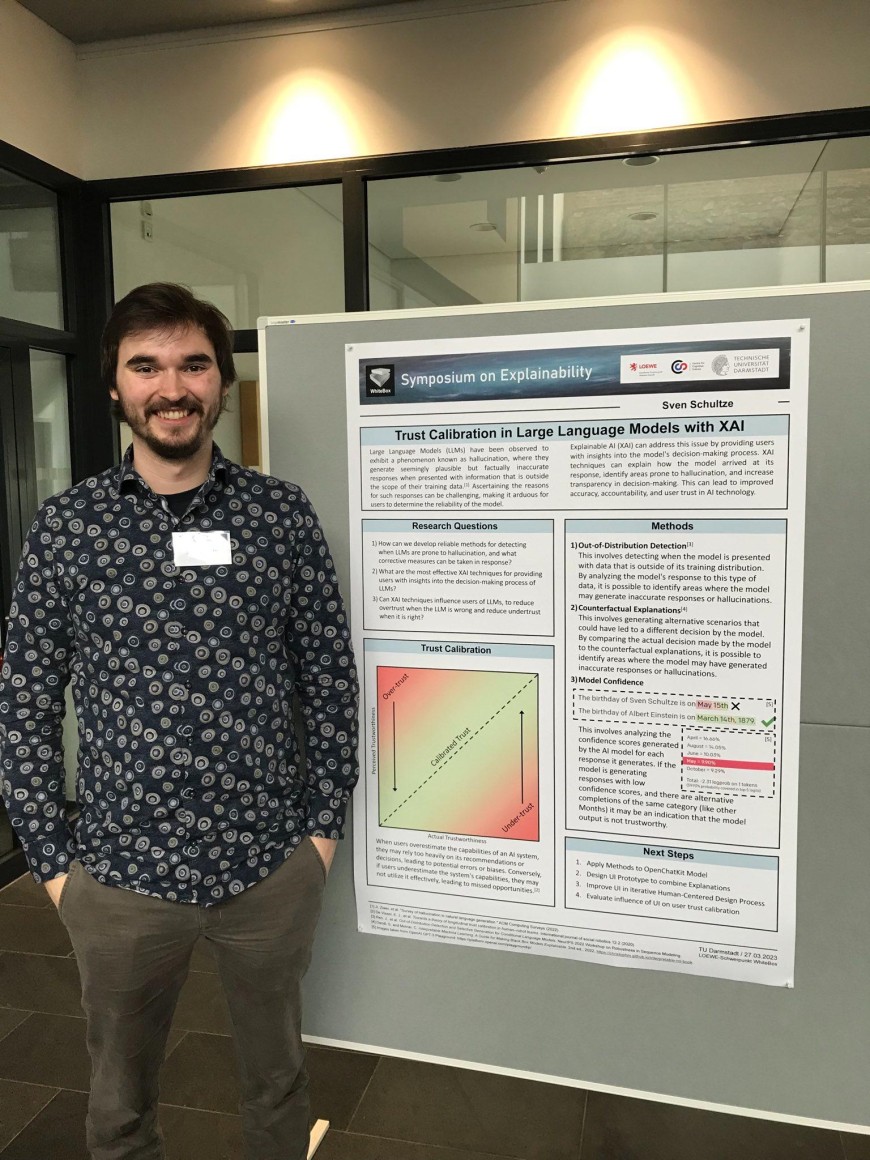

Sven Schultze and Meike Kietzmann attended the WhiteBox Symposium on Explainability and presented their research.

The LOEWE research project WhiteBox investigates the interface between cognitive science and AI methods to make human and artificial intelligence more understandable. Launched in 2021, the interdisciplinary project builds on the basic hypothesis that explaining an artificial intelligence system is not fundamentally different from explaining intelligent, goal-directed behavior in humans.

The symposium featured papers on current research from a variety of disciplines. They addressed questions such as “What can explainable AI truly do?”, “What are the limits of explainable AI?”, “What is the role of explainable AI in the responsible development and use of artificial intelligence?”, and “How do humans understand the explanations of an AI?”

In a poster session, research associates presented their work and discussed their results and interpretations with the symposium participants.

Special thanks go to Dirk Balfanz, who made this symposium possible.

More information can be found here.